2024 SCIEN Industry Affiliates Distinguished Poster Award

| Company | Poster | |
|---|---|---|
|  | Title: Multifunctional Spaceplates for Chromatic and Spherical Aberration Correction Abstract: Spaceplates are nonlocal optical devices with the potential to reduce the form factor of optical systems, but current implementations are limited in performance and in their ability to correct optical aberrations. We introduce a new class of multifunctional spaceplates, designed using gradient-based freeform optimization, which exhibit exceptional efficiencies, compression ratios, and aberration-correcting capabilities. We show that such spaceplates can serve as correctors for metasurfaces and refractive optical elements, and we demonstrate that multifunctional spaceplates can be extended to support multispectral operation as well as Petzval field curvature and spherical aberration correction. We anticipate that these spaceplate concepts will enable the realization of ultracompact optical systems. Authors: Yixuan Shao, Robert Lupoiu, Tianxiang Dai, Jiaqi Jiang, You Zhou, Jonathan A. Fan Bio: Yixuan Shao is a fourth-year PhD student in Electrical Engineering. He conducts research on photonic and optical design under the supervision of Prof. Jonathan Fan. |  |
| Blue River Technology | Title: Orthogonal Adaptation for Multi-concept Fine-tuning of Text-to-Image Diffusion Models Abstract: Customization techniques for text-to-image models have paved the way for a wide range of previously unattainable applications, enabling the generation of specific concepts across diverse contexts and styles. While existing methods facilitate high-fidelity customization for individual concepts or a limited, pre-defined set of them, they fall short of achieving scalability, where a single model can seamlessly render countless concepts. In this paper, we address a new problem called Modular Customization, with the goal of efficiently merging customized models that were fine-tuned independently for individual concepts. This allows the merged model to jointly synthesize concepts in one image without compromising fidelity or incurring any additional computational costs. To address this problem, we introduce Orthogonal Adaptation, a method designed to encourage the customized models, which do not have access to each other during fine-tuning, to have orthogonal residual weights. This ensures that at inference time, the customized models can be summed with minimal interference. Our proposed method is both simple and versatile, applicable to nearly all optimizable weights in the model architecture. In an extensive set of quantitative and qualitative evaluations, our method consistently outperforms relevant baselines in terms of efficiency and identity preservation, demonstrating a significant leap toward scalable customization of diffusion models. Authors: Ryan Po, Guandao Yang, Kfir Aberman, Gordon Wetzstein Bio: Ryan Po is a PhD student at Stanford University working with Prof. Gordon Wetzstein at the Stanford Computational Imaging Lab. His research lies in 3D content generation and reconstruction. |  |
|  | Title: Predicting human eye movements Abstract: A model that accurately predicts typical eye movement paths during visual search has many applications, such as optimizing resource allocation in AR/VR imaging systems, enhancing video game and web-based advertisement design, and aiding in neurological diagnostics. Prominent models of visual search are based on the idea that people create an internal template that approximates the target, which they use to guide their search behavior (Najemnik & Geisler, 2005). These templates are often assumed to be ideal, in the sense that they perfectly match the target’s appearance. We tested this assumption using a novel method that allowed us to estimate the template from a person’s eye movements during search tasks. In both highly controlled and naturalistic scenes, we found that the human-derived templates differ from those of an ideal searcher. We are further exploring the range of templates across individuals and task contexts, with the ultimate goal of building a more accurate model of human eye movements. Authors: Hyunwoo Gu, Justin Gardner Bio: Hyunwoo Gu is a third-year Ph.D. student at Stanford University, advised by Prof. Justin Gardner. His research focuses on modeling human visual behaviors by integrating classical visual psychophysics with recent advancements in vision-language models and diffusion models. |  |
|  | Title: Full-color 3D holographic augmented-reality displays with metasurface waveguides Abstract: Emerging spatial computing systems seamlessly superimpose digital information on the physical environment observed by a user, enabling transformative experiences across various domains, such as entertainment, education, communication, and training. However, the widespread adoption of augmented-reality (AR) displays has been limited by, among other factors, the bulky projection optics of their light engines and their inability to accurately portray three-dimensional (3D) depth cues for virtual content. We will discuss a holographic AR system that overcomes these challenges using a unique combination of inverse-designed full-color metasurface gratings, a compact dispersion-compensating waveguide geometry, and artificial-intelligence-driven holography algorithms. These elements are co-designed to eliminate the need for bulky collimation optics between the spatial light modulator and the waveguide and to present vibrant, full-color, 3D AR content in a compact device form factor. To deliver unprecedented visual quality with our prototype, we developed an innovative image formation model that combines a physically accurate waveguide model with learned components that are automatically calibrated using camera feedback. Our unique co-design of a nanophotonic metasurface waveguide and artificial-intelligence-driven holographic algorithms represents a significant advancement in creating visually compelling 3D AR experiences in a compact wearable device. Authors: Manu Gopakumar*, Gun-Yeal Lee*, Suyeon Choi, Brian Chao, Yifan Peng, Jonghyun Kim, Gordon Wetzstein Bio: Gun-Yeal Lee is a postdoctoral researcher at Stanford University, working with Professor Gordon Wetzstein at the Stanford Computational Imaging Lab. He is broadly interested in optics and photonics, with a particular focus on nanophotonics and optical system engineering. His recent research at the intersection of optics and computer vision focuses on developing next-generation optical imaging, display, and computing systems using advanced photonic devices and AI-driven algorithms. Gun-Yeal completed his PhD at Seoul National University in 2021 under the guidance of Professor Byoungho Lee. For his undergraduate studies, he double-majored in Electrical and Computer Engineering and Physics, also at Seoul National University. He is a recipient of the OSA Incubic/Milton Chang Award, the SPIE Optics and Photonics Education Scholarship, and an NRF postdoctoral fellowship, and was an OSA Emil Wolf Award finalist. Manu Gopakumar is a fifth-year PhD candidate in the Electrical Engineering Department at Stanford University, working with Professor Gordon Wetzstein at the Stanford Computational Imaging Lab. His research interests center on the co-design of optical systems and computational algorithms. More specifically, he is currently focused on using novel computational algorithms to unlock high-quality 3D and 4D holography and more compact form factors for holographic displays. Before coming to Stanford, Manu received Bachelor’s and Master’s degrees in Electrical and Computer Engineering from Carnegie Mellon University, where he worked with Pulkit Grover and Aswin Sankaranarayanan. |  |
|

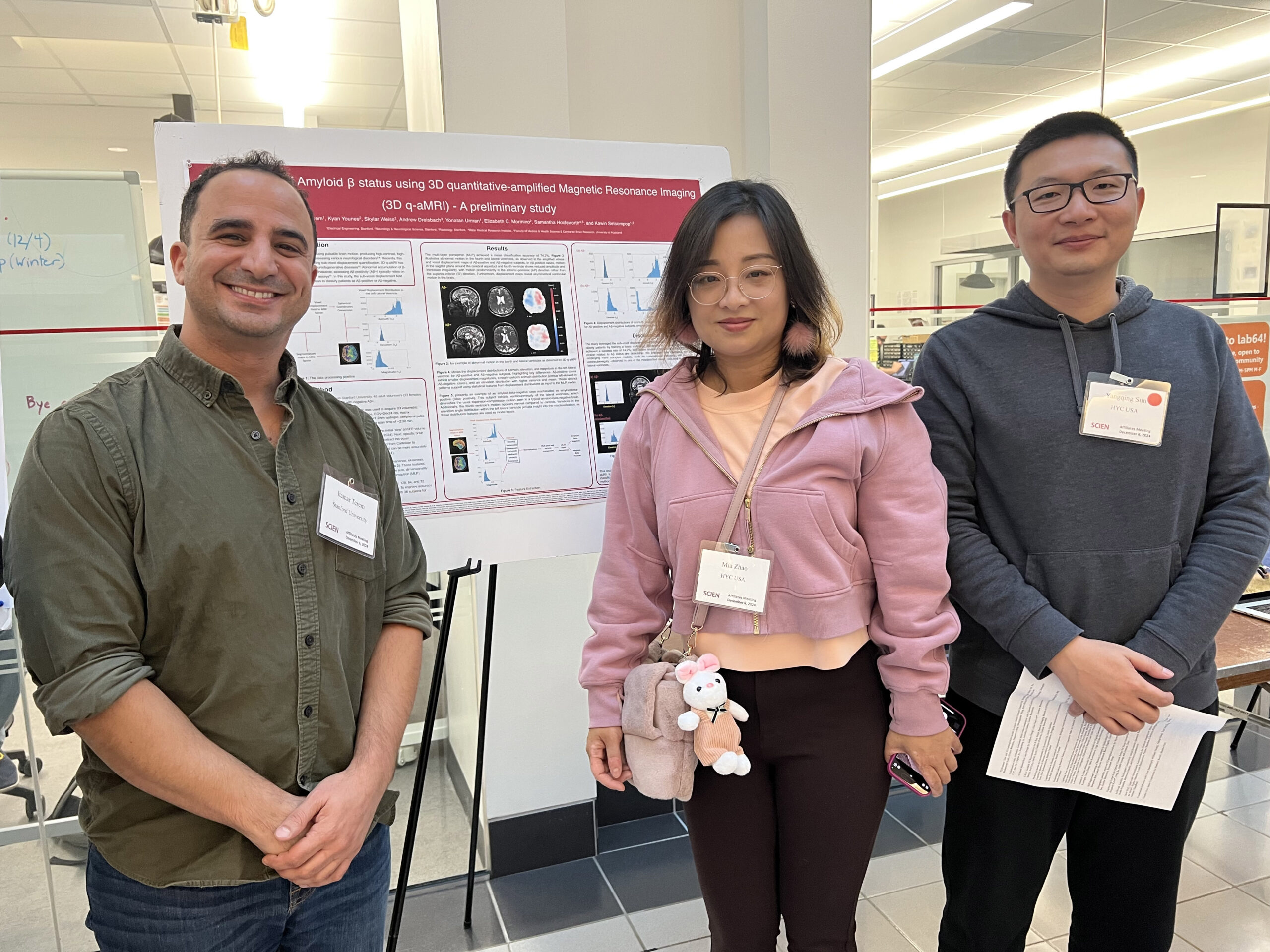

| Title: Classification of amyloid β status using 3D quantitative-amplified Magnetic Resonance Imaging (3D q-aMRI) – A preliminary study Abstract: Amplified Magnetic Resonance Imaging (aMRI) is a method for visualizing pulsatile brain motion, producing high-contrast, high-temporal-resolution ‘videos’ that have shown promise as a tool in assessing various neurological disorders. Recently, this approach was advanced with 3D quantitative aMRI (q-aMRI), enabling sub-voxel displacement quantification. 3D q-aMRI has shown promise in detecting abnormal brain motion in patients with neurodegenerative diseases. Abnormal accumulation of β-amyloid (Aβ) in the brain is an early indicator of Alzheimer’s disease. However, assessing Aβ status typically relies on invasive procedures such as PET scans or cerebrospinal fluid (CSF) assays. In this study, the sub-voxel displacement field generated by 3D q-aMRI was used as input to a multi-layer perceptron to classify patients as Aβ+ or Aβ-. Authors: Itamar Terem, Kyan Younes , Skylar Weiss, Andrew Dreisbach, Yonatan Urman, Elizabeth C. Mormino, Samantha Holdsworth, and Kawin Setsompop Bio: Itamar Terem is a PhD candidate in the Department of Electrical Engineering at Stanford University and an NSF Graduate Research Fellow. His current research focuses on developing computational and acquisition techniques in Magnetic Resonance Imaging to explore pulsatile brain dynamics and their potential as biomarkers for various neurological conditions, tissue mechanical response and clearance mechanician to blood pulsation and cerebrospinal fluid (CSF) motion |  |

|

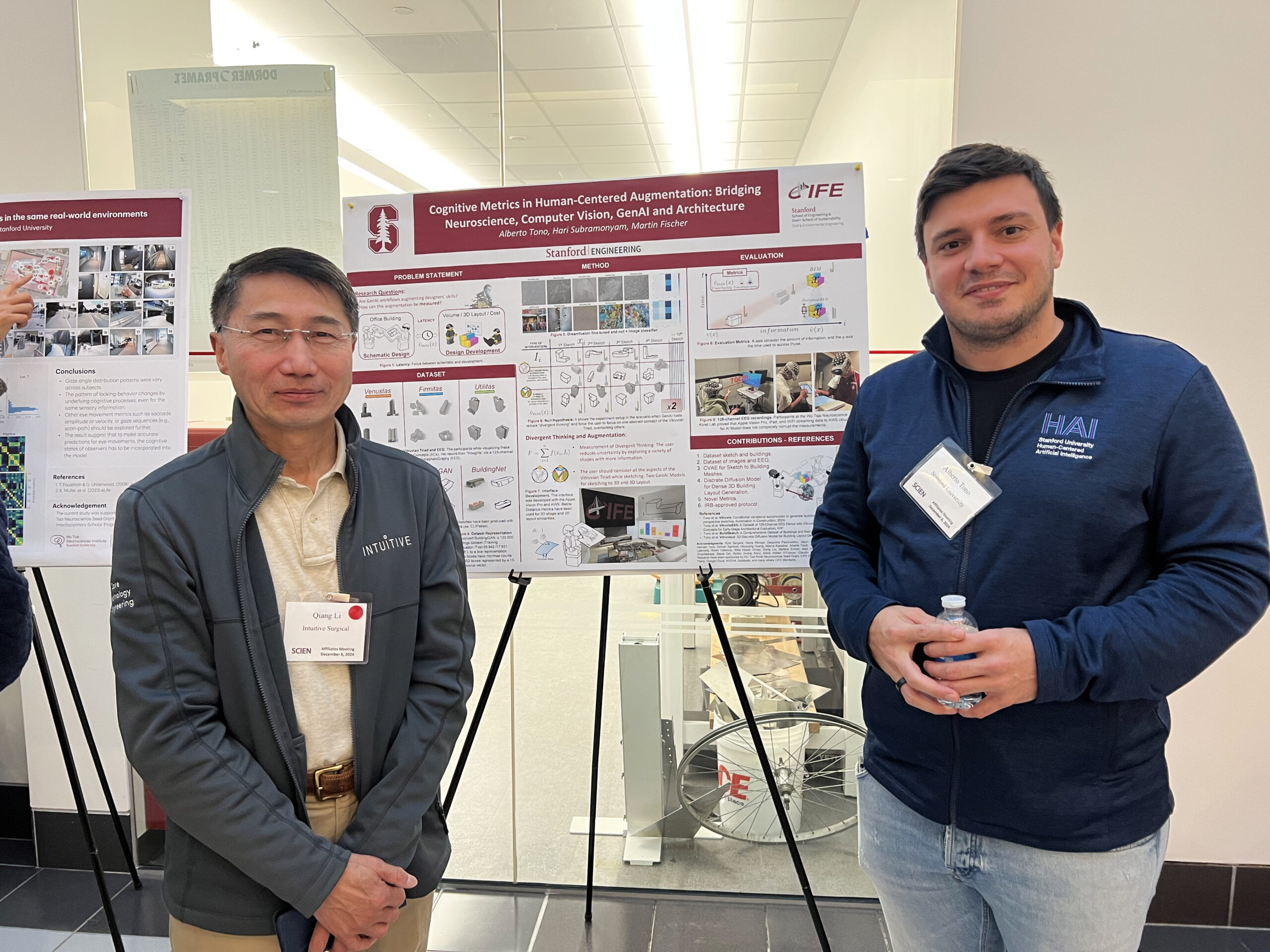

| Title: Cognitive Metrics in Human-Centered Augmentation: Bridging Neuroscience, Computer Vision, and AEC Abstract: Vitruvius articulated that an optimal designer should consider firmitas (strength), utilitas (utility), and venustas (aesthetics) during the initial design phases. Therefore, how do we objectively measure this consideration at the early stage of the project, setting the project on the right track? More importantly, how do we assess if novel 3D GenAI tools allow considering these three aspects during these initial phases, augmenting the designer’s skills? This research aims to answer whether these novel 3DGenAI tools augment human designer skills. We introduce a dataset, two 3D GenAI methods, and an IRP-approved protocol to test a novel 3D GenAI interface. This research can inform Brain-Computer Interfaces (BCIs) that identify these design elements and highlight overlooked factors, helping set projects on a successful path early in the design process. Authors: Alberto Tono, Hari Subramonyam, Martin Fischer Bio: Tono Alberto is a current Ph.D. Candidate at Stanford University under the supervision of Kumagai Professor Martin Fischer in Civil Environmental Engineering. Furthermore, he is the founder of the Computational Design Institute, where he is exploring ways in which the convergence between Digital and Humanities can facilitate cross-pollination between different industries. Following this mission, he became a Stanford HAI Graduate Fellow researching Human-Centered AI solutions for augmenting and amplifying human capabilities with the design process. |  |

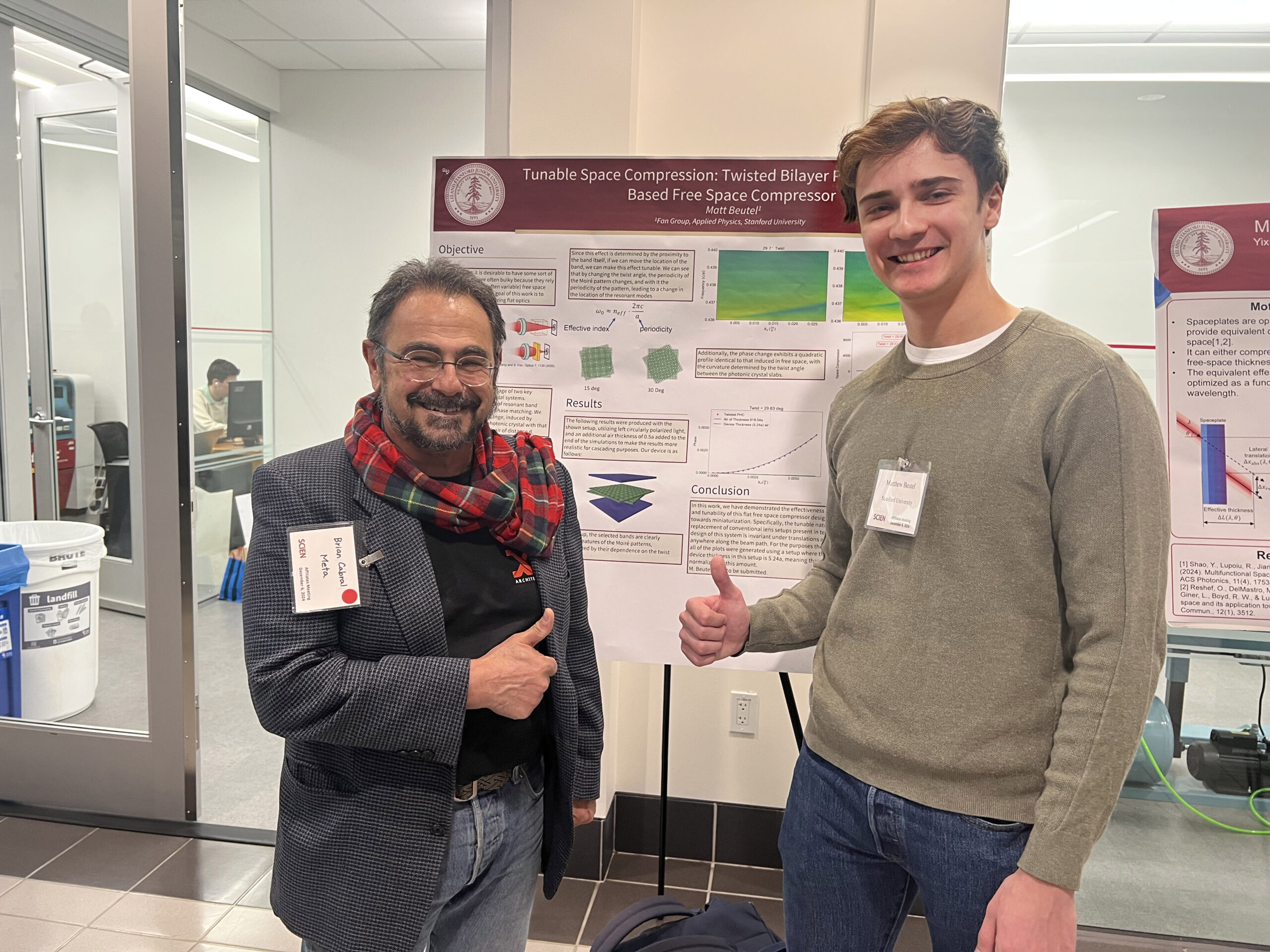

| Title: Tunable Free Space Compression Abstract: With the goal of miniaturization, there has been significant interest in the use of flat optics to shrink free space. Due to the momentum-dependent transfer function of free space, local optics are ruled out. In our work, we show that not only can free space be replaced with non-local flat optics in the momentum domain, but that it can be done in a tunable manner. We describe the operating principle and through the use of twisted bilayer photonic crystal systems and Moire periodicity, provide a specific setup that accomplishes this function. The operating function of this device is one that is key in the miniaturization of many present-day technologies. Authors: Matthew Beutel, Shanhui Fan Bio: Matthew Beutel is a second-year Applied Physics PhD student. He is advised by Shanhui Fan and is working on photonic crystal device design. |  |

|

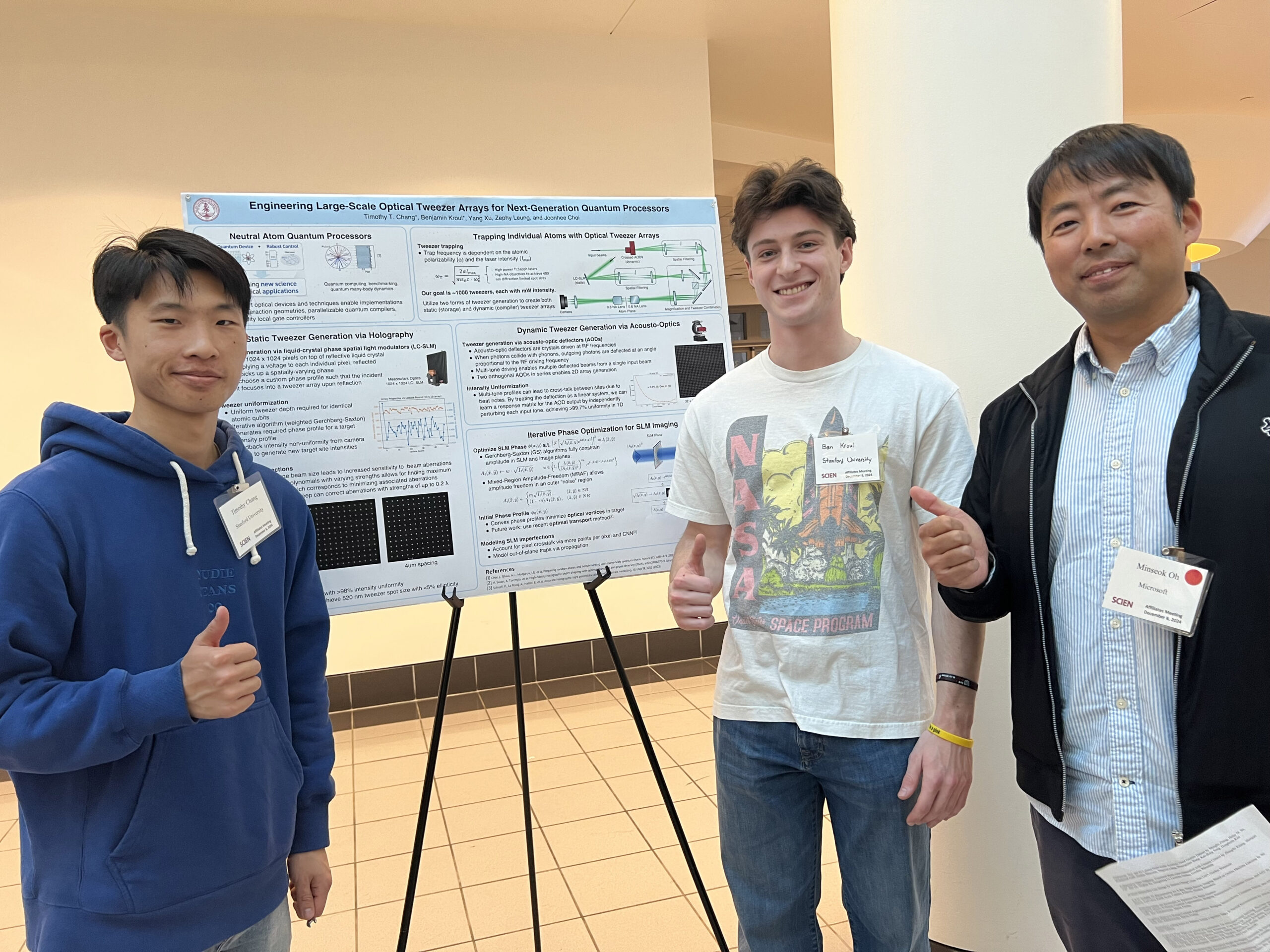

| Title: Engineering Large-Scale Optical Tweezer Arrays for Next-Generation Quantum Processors Abstract: Advances in quantum computing and simulation herald a revolutionary approach to solving computationally intensive problems that have long remained intractable for classical computers. In recent years, arrays of neutral atoms trapped by optical tweezers have emerged as a groundbreaking architecture for quantum information processing, offering exceptional scalability and programmable interactions. Foundational work in optical phase engineering and acousto-optics have been especially instrumental in developing atom-based quantum processors, enabling state-of-the-art implementations of arbitrary interaction geometries, parallelizable quantum compilers, and high-fidelity local gate controllers. In this work, we present progress towards generating both static and dynamic arrays of up to 1024 optical tweezers through a high-NA objective, using a Spatial Light Modulator (SLM) and crossed Acousto-Optic Deflectors (AODs), respectively. We demonstrate preliminary work for optimizing intensity uniformity, correcting aberrations, and developing in-house phase engineering solutions to address key optical challenges in high-density tweezer arrays. Authors: Timothy Chang*, Benjamin Kroul*, Yang Xu, Zephy Leung, and Joonhee Choi Bio: Timmy Chang and Ben Kroul are building utility-scale quantum processors for both benchmarked analog quantum simulation and fault-tolerant quantum computation, advised by Prof. Joonhee Choi in the Electrical Engineering department. Timmy is a second-year PhD student in EE, and Ben is a co-term student in Applied Physics. |  |

|  | Title: AI-based Metasurface Lens Design Abstract: Conventional optical imaging systems are bulky and complex, requiring multiple elements to correct aberrations. Optical metasurfaces, planar structures capable of manipulating light at subwavelength scales, are compact alternatives to conventional refractive optical elements. Their small volume makes them well suited to technologies such as AR/VR displays and wearables. However, existing metalenses face significant challenges from monochromatic (e.g., coma) and chromatic aberrations, which limit their applicability. Here we present an end-to-end AI-based computational method that parametrizes the profile of metalenses and optimizes it based on customized loss functions. This innovation enables wide-angle imaging with corrected aberrations while retaining a single-layer form factor, overcoming the key limitations of existing metalenses and advancing their potential for miniaturized imaging systems. Authors: Jiazhou Cheng*, Gun-Yeal Lee*, Gordon Wetzstein Bio: Jiazhou “Jasmine” Cheng is a first-year PhD student supervised by Prof. Gordon Wetzstein, interested in computational imaging technologies. |  |
|  | Title: Collaborative Video Diffusion: Consistent Multi-video Generation with Camera Control Abstract: Research on video generation has recently made tremendous progress, enabling high-quality videos to be generated from text prompts or images. Adding control to the video generation process is an important goal moving forward, and recent approaches that condition video generation models on camera trajectories make strides toward it. Yet it remains challenging to generate videos of the same scene from multiple different camera trajectories. Solutions to this multi-video generation problem could enable large-scale 3D scene generation with editable camera trajectories, among other applications. We introduce collaborative video diffusion (CVD) as an important step toward this vision. The CVD framework includes a novel cross-video synchronization module that uses an epipolar attention mechanism to promote consistency between corresponding frames of the same video rendered from different camera poses. Trained on top of a state-of-the-art camera-control module for video generation, CVD generates multiple videos rendered from different camera trajectories with significantly better consistency than baselines, as shown in extensive experiments. Authors: Zhengfei Kuang, Shengqu Cai*, Hao He, Yinghao Xu, Hongsheng Li, Leonidas Guibas, Gordon Wetzstein Bio: Zhengfei Kuang is a third-year Ph.D. student at Stanford University, primarily advised by Prof. Gordon Wetzstein. His main research interests are neural rendering, 3D/4D reconstruction and generation, and video diffusion models. Before Stanford, he studied at the University of Southern California (advised by Prof. Hao Li) and Tsinghua University. |  |
|  | Title: Self-Supervised Learning of Motion Concepts by Optimizing Counterfactuals Abstract: A major challenge in representation learning from visual inputs is extracting information from the learned representations into an explicit and usable form. This is most commonly done by learning readout layers with supervision or by using highly specialized heuristics. This is challenging primarily because the pretext tasks and the downstream tasks that extract information are not tightly connected in a principled manner: improving the former does not guarantee improvements in the latter. The recently proposed counterfactual world modeling paradigm aims to address this challenge through a masked next-frame predictor base model, which enables simple counterfactual procedures for extracting optical flow, segments, and depth. In this work, we take the next step and parameterize and optimize the counterfactual extraction of optical flow by solving the same simple next-frame prediction task as the base model. Our approach, Opt-CWM, achieves state-of-the-art performance for motion estimation on real-world videos while requiring no labeled data. This work lays the foundation for future methods that extract more complex visual structures, such as segments and depth, with high accuracy. Authors: Stefan Stojanov, David Wendt, Seungwoo Kim, Rahul Mysore Venkatesh, Kevin Feigelis, Jiajun Wu, Daniel LK Yamins Bio: Stefan Stojanov is a postdoctoral researcher working with Professors Jiajun Wu and Daniel Yamins. He is interested in building computer vision systems guided by our knowledge about the generalization, adaptability, and efficiency of human perception and its development. Stefan completed his PhD at the Georgia Institute of Technology, where he worked on self-supervised and data-efficient computer vision algorithms. |  |
|  | Title: CoT-VLA: Visual Chain-of-Thought Reasoning for Vision-Language-Action Models Abstract: Vision-language-action models (VLAs) have shown potential in leveraging pretrained vision-language models and diverse robot demonstrations for learning generalizable sensorimotor control. While this paradigm effectively utilizes large-scale data from both robotic and non-robotic sources, current VLAs primarily focus on direct input–output mappings and lack the intermediate reasoning steps crucial for complex manipulation tasks. As a result, existing VLAs lack temporal planning or reasoning capabilities. In this paper, we introduce a method that incorporates explicit visual chain-of-thought (CoT) reasoning into VLAs by autoregressively predicting future image frames as visual goals before generating a short action sequence to achieve these goals. We introduce CoT-VLA, a state-of-the-art 7B VLA that can understand and generate visual and action tokens. Our experimental results demonstrate that CoT-VLA achieves strong performance, outperforming the state-of-the-art VLA model by 17% in real-world manipulation tasks and 6% in simulation benchmarks. Authors: Qingqing Zhao, Yao Lu, Moo Jin Kim, Zipeng Fu, Zhuoyang Zhang, Yecheng Wu, Max Li, Qianli Ma, Song Han, Chelsea Finn, Ankur Handa, Donglai Xiang, Gordon Wetzstein, Ming-Yu Liu, Tsung-Yi Lin Bio: Qingqing Zhao is a final-year Ph.D. student in Electrical Engineering at the Stanford Computational Imaging Lab, advised by Prof. Gordon Wetzstein. She is interested in foundation models for perception, control, and modeling. |  |
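
Two of the posters in this list rely on one property of free space: Multifunctional Spaceplates and Tunable Free Space Compression. Free space multiplies each plane-wave component with transverse wavevector k_x by a phase k_z·d, where k_z = sqrt(k0² − k_x²). That phase depends on momentum rather than on position, so a thin local element cannot reproduce it. A nonlocal spaceplate of thickness L, however, can emulate a longer gap d_eff. The Python sketch below illustrates this under several assumptions:

- ideal scalar propagation in a uniform medium,
- the commonly used compression-ratio definition R = d_eff / L,
- an idealized plate that matches free space exactly.

The function names are hypothetical, and this is not the design method of either poster.

```python
import math


def free_space_phase(kx: float, d: float, wavelength: float) -> float:
    """Phase k_z * d picked up by a plane wave with transverse wavevector kx
    after propagating a distance d (angular-spectrum picture, scalar field)."""
    k0 = 2 * math.pi / wavelength
    if abs(kx) > k0:
        # Components with |kx| > k0 are evanescent; this sketch ignores them.
        raise ValueError("evanescent component: |kx| must not exceed k0")
    kz = math.sqrt(k0**2 - kx**2)
    return kz * d


def relative_phase(kx: float, d: float, wavelength: float) -> float:
    """Angle-dependent part of the free-space phase, referenced to normal
    incidence (kx = 0). A global phase does not affect imaging; this
    momentum-dependent part is what a spaceplate has to reproduce."""
    return free_space_phase(kx, d, wavelength) - free_space_phase(0.0, d, wavelength)


def compression_ratio(d_eff: float, thickness: float) -> float:
    """Assumed definition R = d_eff / L: free-space length replaced per unit plate thickness."""
    return d_eff / thickness


def ideal_spaceplate_relative_phase(kx: float, thickness: float, R: float, wavelength: float) -> float:
    """Hypothetical ideal spaceplate of the given thickness and compression
    ratio R: by construction it imparts the same angle-dependent phase as
    free space of length R * thickness."""
    return relative_phase(kx, R * thickness, wavelength)


if __name__ == "__main__":
    wavelength = 1.0e-6                     # 1 um, illustrative value
    k0 = 2 * math.pi / wavelength
    kx = k0 * math.sin(math.radians(10.0))  # plane wave tilted by 10 degrees

    plate = ideal_spaceplate_relative_phase(kx, thickness=10e-6, R=5.0, wavelength=wavelength)
    gap = relative_phase(kx, 50e-6, wavelength)
    print(math.isclose(plate, gap))  # True: a 10 um plate with R = 5 stands in for 50 um of free space
    print(plate < 0)                 # True: tilted waves accumulate less phase along z than at normal incidence
```

In the final comparison, the hypothetical 10 µm plate and the 50 µm gap impose the same angle-dependent phase. That equivalence is what allows a spaceplate to shorten an optical system. A real device only approximates this over a limited range of angles and wavelengths.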